DeepMind Just Bought Into EVE Online. Here's the Layer Even Their Own Research Says Is Missing.

Gameplay data captures what players do. It does not capture why. That is the gap, and DeepMind has already named it.

Last week in Las Vegas, I watched a panel that quietly reframed how I think about AI’s path to general intelligence. The session was titled “Building worlds that think: What happens when AI serves the creative vision,” and it brought together two organizations most people would put in completely different categories. One makes video games. The other is trying to build artificial general intelligence. Yet sitting on stage at iicon, Ubisoft and Google DeepMind were having essentially the same conversation.

A few days later, the news hit. Google DeepMind had taken a minority stake in Fenris Creations, the studio formerly known as CCP Games and the developer of EVE Online. The same week, Alexandre Moufarek, Product Lead for Project Inception at DeepMind, sat on that panel and explained why gameplay data is foundational to advancing Gemini and the next generation of AI systems. Moufarek’s career started in video games. So did Demis Hassabis’s. Hassabis built simulation games like Theme Park before he co-founded DeepMind, and he has said publicly that games are the perfect training ground for AI.

My thesis: video games are the most underestimated infrastructure in artificial intelligence today, and the EVE Online deal proves it. But there is a layer missing from the conversation, and DeepMind’s own research already points to it. Gameplay data captures what players do. It does not capture why. Without modeling player psychology, AI will hit a ceiling on its way to understanding any complex world, starting with virtual ones and ending with the real one.

The deal that just rewired AI’s training stack

On the surface, the Fenris Creations announcement reads like a typical management buyout. Pearl Abyss sold the studio back to its leadership for $120 million in cash and non-cash consideration. CCP rebranded as Fenris Creations, kept its studios in Reykjavík, London, and Shanghai, and confirmed there would be no layoffs. The company closed 2025 with over $70 million in revenue, a record-breaking November, and the second-highest revenue quarter in EVE Online’s 20-year history. Two new titles are in development: EVE Vanguard, an extraction-adventure FPS, and EVE Frontier, an online space survival game.

The interesting part is what came next. Alongside the buyout, Fenris signed a research partnership with Google DeepMind. Google took a minority equity stake. DeepMind will work with an offline version of EVE Online running on a local server to test and evaluate models. The stated focus areas are revealing: long-horizon planning, memory, and continual learning. These are three of the hardest open problems in AI. They are also three of the hardest problems EVE Online players have been solving every day for two decades.

EVE Online is famous for player-driven economies that rival those of small countries, for corporations that span continents, and for wars that last years and involve tens of thousands of human participants. It is one of the few environments on the planet where you can study persistent, adversarial, multi-agent decision-making at scale. That is why DeepMind wanted in. Not because it is a game. Because it is, functionally, a synthetic civilization.

Why games have always been AI’s training ground

Gaming has been at the heart of many of DeepMind’s best-known breakthroughs. DQN used reinforcement learning to play Atari arcade games, matching or beating human-level scores on many of them. AlphaGo beat a world champion at Go, a game with more possible board positions than there are atoms in the observable universe. AlphaStar mastered StarCraft II, a real-time strategy game with imperfect information and long planning horizons. SIMA, DeepMind’s Scalable Instructable Multiworld Agent, learned to follow natural language instructions across multiple commercial 3D games.

Hassabis put it directly in the Fenris announcement: games are “the perfect training ground for developing and testing AI algorithms.” There are good reasons for this. Games provide closed environments with clear rules, dense feedback signals, and effectively unlimited episodes. They generate behavioral data orders of magnitude richer than what any internet scrape can produce. The best games push humans to the edge of their cognitive limits, which means an AI that can match human performance has, by definition, learned something hard.


Moufarek’s role at DeepMind is to operationalize this. Project Inception focuses on using gameplay data to advance Gemini’s capabilities for complex world creation. The thinking is straightforward. If you want AI to model the real world, train it on the most complex synthetic worlds humans have ever built. Train it on EVE Online, on Ubisoft’s open worlds, on the messy, multi-agent, long-horizon dynamics that no static dataset can capture.

For brand and strategy leaders, the implication is significant. The companies producing the richest behavioral data on Earth right now are not Big Tech platforms. They are gaming publishers. The most valuable data partnerships in AI over the next five years will look a lot more like Fenris-DeepMind than like the licensing deals we saw with publishing and media.

What EVE Online uniquely provides

EVE Online is interesting to DeepMind for one reason above all others. It is the closest thing we have to a stable, persistent, complex system where every meaningful decision is made by a human. There are no NPC factions running the economy. The cartels, the alliances, the heists, the betrayals, all of it comes from players. Studying the behavioral patterns this produces is functionally equivalent to studying a real economy in miniature.

Consider what a single fleet engagement in EVE generates: spatial reasoning across thousands of units, real-time coordination among dozens of human commanders, deception about troop movements, negotiation with allied corporations, betrayal of long-standing pacts, and resource allocation decisions tied to in-game markets that respond to scarcity. All of this happens in a single session. Multiply it by 20 years of continuous operation, and you have the densest behavioral dataset on long-horizon human cooperation and conflict that exists outside of human history itself.

This is exactly the data DeepMind needs to make progress on planning, memory, and continual learning. A model trained on EVE has to figure out how to maintain coherent strategies across weeks of game time. It has to remember what it learned three engagements ago. It has to update its understanding when the meta shifts. These capabilities map directly onto the AGI roadmap.

The missing layer: player psychology

Here is where the conversation needs to evolve. Gameplay data captures actions. It captures positions, decisions, win rates, resource flows, and click patterns. What it does not naturally capture is the cognitive and emotional state that produced those actions.

Two players in EVE can take identical actions for completely different reasons. One is following a calculated long-term strategy. The other is acting out of frustration after losing a ship. The behavior looks the same. The decision-making process behind it is fundamentally different. Train AI only on the behavioral output and you teach it to mimic patterns. You do not teach it to understand intent.
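
For readers who think in data, the point above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of how EVE Online logs events or how DeepMind trains models: the `Event` record, the player names, the action name, and especially the `intent` labels are all hypothetical. Real gameplay logs would not contain an intent field at all, which is the gap.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """One logged player action (hypothetical schema, for illustration only)."""
    player: str
    action: str   # what a gameplay log would record
    intent: str   # assumed annotation; raw gameplay data does not include this


# Two invented players taking the identical action for different reasons.
events = [
    Event("pilot_a", "attack_structure", "planned_campaign"),
    Event("pilot_b", "attack_structure", "frustration_after_loss"),
]


def behavioral_view(log):
    """Count actions only, as a purely behavioral dataset would."""
    return Counter(e.action for e in log)


def intent_view(log):
    """Count (action, intent) pairs, assuming intent labels were available."""
    return Counter((e.action, e.intent) for e in log)


if __name__ == "__main__":
    print(behavioral_view(events))
    print(intent_view(events))
```

In the behavioral view the two events collapse into a single pattern. In the intent view they stay distinct, which is the extra information a model would need to tell a strategist from a frustrated player.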

This matters because intent is what generalizes. A model that knows why a player made a decision can predict what they will do in a situation it has never seen. A model that only knows what was done becomes brittle the moment context shifts. This is the same problem that has plagued recommendation engines, autonomous vehicles, and large language models for years. They are very good at predicting the next observable action. They are weak at modeling the human reasoning behind it.

For AI to move from pattern matching to genuine world understanding, it has to model the psychology of the actors in the world. Beliefs. Goals. Emotions. Risk tolerance. Trust. Memory of past interactions. The cognitive architecture that produces behavior, rather than just the behavior itself.

DeepMind’s own framework confirms this

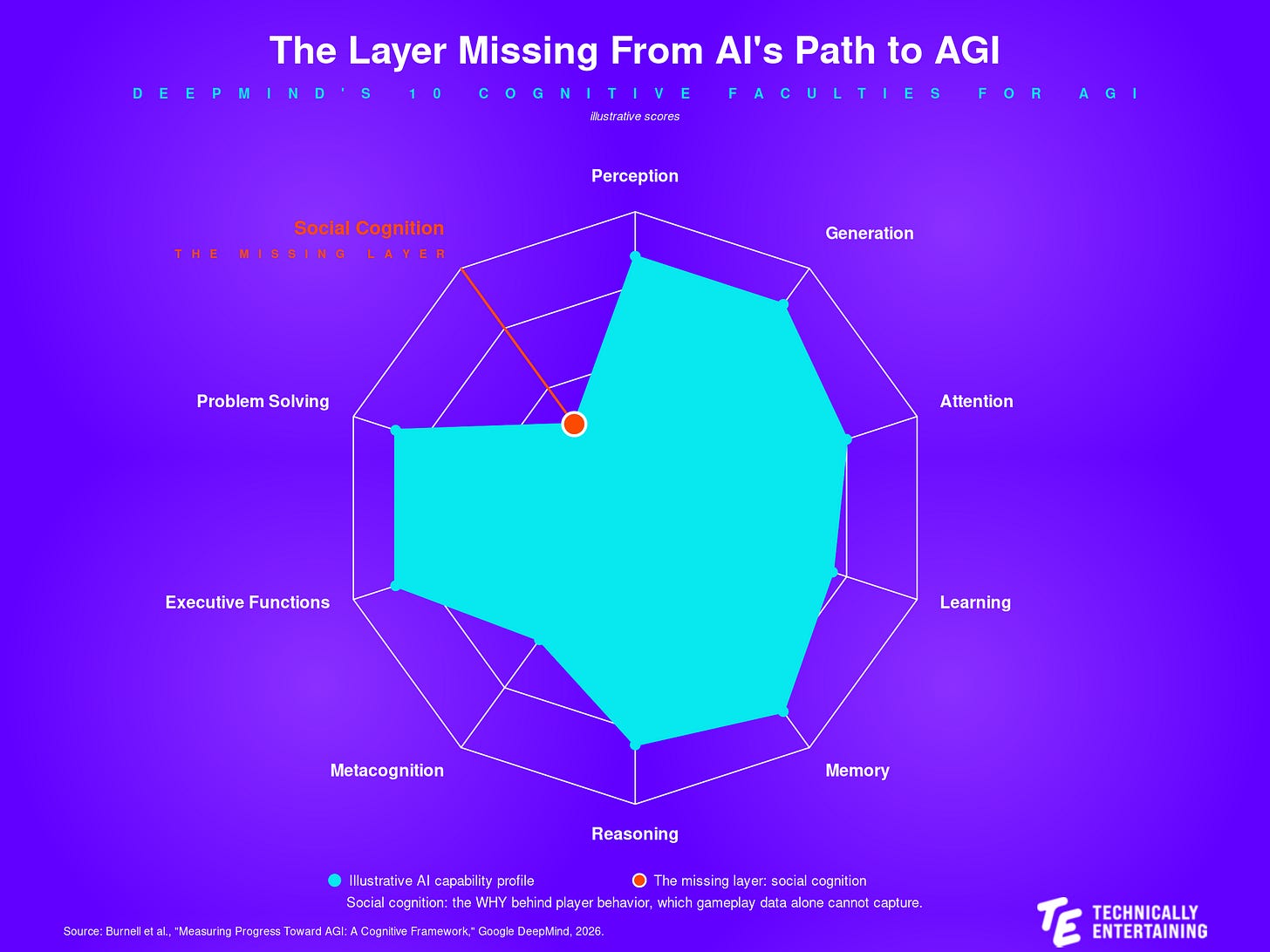

What makes this argument concrete is that DeepMind has already articulated it itself. In March 2026, the DeepMind research team published a paper titled “Measuring Progress Toward AGI: A Cognitive Framework.” It is one of the clearest documents I have seen on what the AGI roadmap actually looks like. The authors propose a Cognitive Taxonomy with 10 cognitive faculties an AGI system needs to demonstrate.

Eight of them are foundational: perception, generation, attention, learning, memory, reasoning, metacognition, and executive functions. Two are composite faculties that require multiple foundational abilities working together: problem solving and social cognition.

Social cognition, as the paper defines it, is “the ability to process and interpret social information and to respond appropriately in social situations.” It includes social perception (reading cues from facial expressions, tone, body language), theory of mind (reasoning about beliefs, desires, emotions, intentions, and perspectives of others), and social skills (cooperation, negotiation, deception, persuasion).

The paper is explicit on the consequence of weakness in this area: “a system deficient in social understanding is likely to perform poorly in situations that involve complex interactions with people.”

That is the gap. EVE Online provides world-class data on long-horizon planning, memory, and continual learning. It also happens to be one of the richest available environments for studying theory of mind, negotiation, deception, and cooperation at scale. Whether DeepMind taps into the social cognition layer of EVE, alongside the planning layer, will determine how useful this partnership ultimately is for AGI progress.

What this means for brand and strategy leaders

Three implications matter for executives reading this.

First, gaming IP is now strategic AI infrastructure. If your brand has equity in a gaming partnership, a virtual world, or a UGC platform, the underlying behavioral data is potentially more valuable than the in-game advertising or licensing economics. Treat the data as a balance sheet asset and evaluate it the way you would evaluate proprietary research.

Second, the next wave of AI partnerships will be psychological, not only behavioral. The companies that pair gameplay data with player psychology research, including motivation, identity, social dynamics, and emotional state, will produce models that understand humans rather than models that imitate them. If your category depends on understanding consumer intent at scale, this is the partnership pattern to track.

Third, this is a wake-up call for marketers and strategists who still think of AI as a content tool. The systems being trained on EVE Online today will eventually power the assistants, agents, and decision systems your customers interact with. The ones that succeed will understand why people make decisions, going well beyond simply tracking what they choose.

The Fenris Creations deal is a signal. The DeepMind cognitive framework is the strategy. Together, they mark the moment AI started taking psychology as seriously as it takes data.

What do you think? Is gameplay psychology the missing piece in the AGI roadmap, or is behavioral data alone enough? Let me know in the comments.

Technically Entertaining is a publication for marketing, brand, and strategy leaders navigating the intersection of gaming, technology, and entertainment. If this resonated, subscribe to get the next piece in your inbox.