Why Your $1 Billion AI Agent Still Can't Read the Room

Meta's former Chief AI Scientist's bet on understanding reality misses the most important part: understanding humans. AI doesn't have a personality problem. It has a psychology problem.

Dear Readers,

At a tech conference in Munich recently, I sat down with a senior McKinsey partner who works on AI agent implementations for Fortune 500 companies. After we discussed half a dozen deployments across energy, healthcare, and financial services, he said something that stopped me cold:

“The number one complaint we hear from enterprise clients isn’t about accuracy or speed. It’s that the agents feel bland and robotic. They lack personality.”

I heard the same sentiment from a room full of executives at a large European bank, where I ran a workshop on personalization, AI, and gaming.

But this shouldn’t surprise anyone who’s used Claude, ChatGPT, or pretty much any other AI assistant for more than a few minutes (Elaris is the exception, since it actually uses real psychological data to power its models). The technology is impressive. The conversation is hollow. You’re talking to something that can process millions of tokens and reason through complex problems, yet it has no idea who you are beyond what you’ve typed in the current chat.

The knee-jerk corporate response has been predictable and wrong: pre-define agent personalities. Build a suite of options.

Professional Agent.

Casual Agent.

Empathetic Agent.

Terse Agent.

Give users a choice and let them pick what works.

That’s not personalization. That’s a personality quiz from a 1990s magazine. And it fundamentally misunderstands the problem.

What’s funny to one person is offensive to another. What feels efficient to me feels cold to you. There are no objective definitions of “fun,” “nice,” or “pragmatic.” These are subjective evaluations that depend entirely on who’s receiving the message and what psychological state they’re in when they receive it.

This is why AI agents need to understand human psychology, not just mimic human personalities. And it’s why the current generation of LLMs, and even the next generation of world models, will fail to deliver true personalization without a fundamental infrastructure layer built on valid psychological data.

The LLM Limitation: Pattern Matching Without Understanding

Large language models like Claude and ChatGPT are statistical marvels. They’ve ingested billions of words and learned the patterns of human communication with stunning accuracy. They can write like Shakespeare, code like a senior engineer, and explain quantum physics like Carl Sagan.

But they don’t understand you. They understand language patterns. They know what words typically follow other words in specific contexts. They can detect sentiment in your message and adjust their tone accordingly. But that’s reactive pattern matching, not psychological understanding.

When you tell ChatGPT you’re having a bad day, it responds with empathetic language because that’s what the training data suggests is appropriate. It doesn’t know whether you’re the kind of person who wants emotional validation or practical solutions. It doesn’t understand if you’re conflict-avoidant or direct. It has no model of your cognitive preferences, your communication style, or your psychological drivers.

This creates a fundamental ceiling on personalization. LLMs can be trained to sound different. They can adopt various tones. They can even remember facts about you across conversations. But they can’t adapt to who you are psychologically because they don’t have a framework for understanding human psychology in the first place.

The result is interactions that feel generic even when they’re technically customized. The agent knows you prefer bullet points over paragraphs. It doesn’t know why you prefer them, what that preference reveals about how you process information, or how that should inform its approach to more complex interactions.

The World Model Promise: Understanding Reality, Missing Humanity

Yesterday, Yann LeCun, Meta’s former Chief AI Scientist and widely considered one of the godfathers of AI, announced his new startup, AMI Labs. It has raised $1.03 billion at a $3.5 billion valuation to build world models, the largest seed round ever for a European startup. The premise is compelling: instead of training AI on language patterns, train it to understand the physical world the way humans do.

LeCun has been vocal about the limitations of LLMs, particularly their tendency to hallucinate. His proposed solution, Joint Embedding Predictive Architecture (JEPA), aims to build AI that learns from reality, not just from text. World models observe the world, build representations of how it works, and can reason about it more reliably than systems trained purely on language.

AMI Labs CEO Alexandre LeBrun, formerly CEO of digital health startup Nabla, understands the stakes. In healthcare, LLM hallucinations could have life-threatening repercussions. A world model that understands medical reality more fundamentally could be transformative.

But here’s what the world model approach still misses: the human beings inside those worlds, whose interactions with the world and with each other shape what that world means.

A world model might understand that a hospital room contains certain equipment, that medications have specific effects, and that medical procedures follow particular sequences. But does it understand that the patient in that room is terrified of needles because of childhood trauma? That they’re a visual learner who needs diagrams, not verbal explanations? That they make decisions collaboratively with family members rather than independently?

The world isn’t just physical reality. It’s the psychological and social reality of the humans who inhabit it. And you can’t reason effectively about human needs, preferences, or optimal interactions without a psychological model of the humans you’re serving.

World models are a step forward for AI reasoning about physical systems. They’re not sufficient for AI that needs to reason about human systems. And most valuable AI agent use cases live squarely in the human domain.

Where This Actually Matters: The BCG Reality Check

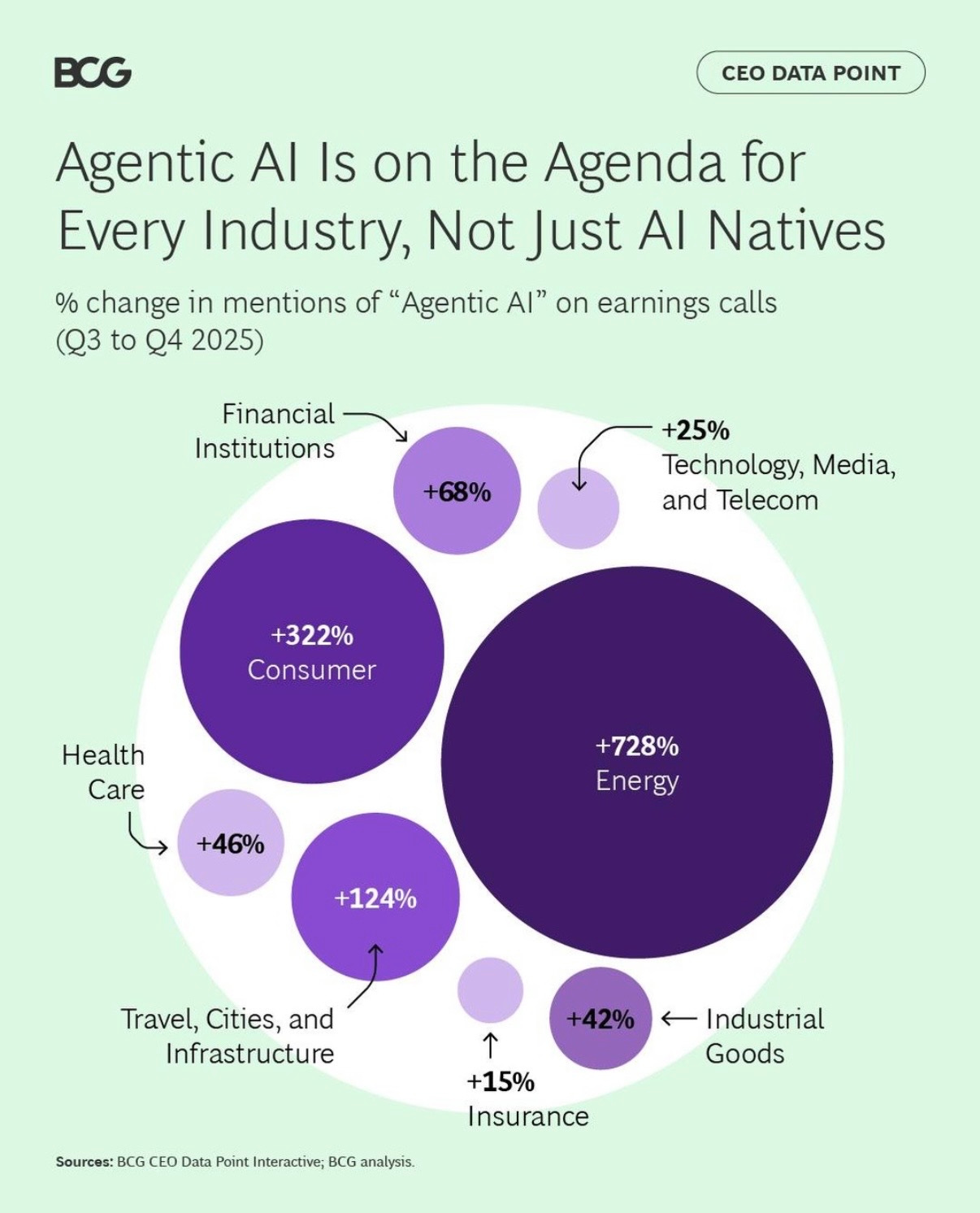

Boston Consulting Group recently analyzed mentions of “Agentic AI” on earnings calls from Q3 to Q4 2025. The results show where enterprises are focusing their agentic AI efforts.

Mentions of agentic AI rose 728% among energy companies, 322% among consumer businesses, 46% in healthcare, 68% among financial institutions, and 124% in travel and infrastructure.

These aren’t use cases where understanding physical reality is the primary challenge. They’re use cases where understanding human behavior, preferences, and psychology is the entire point.

In energy, agents are being deployed for customer service, billing inquiries, and usage optimization. Success requires understanding how different customer segments respond to pricing changes, what communication styles drive behavior change, and how to tailor recommendations to individual psychology.

In consumer businesses, agents are handling everything from product recommendations to complaint resolution. The difference between an agent that drives revenue and one that annoys customers isn’t product knowledge. It’s psychological intelligence about how to match products to personality types, how to de-escalate frustrated customers based on their communication style, and how to create experiences that feel personalized rather than algorithmic.

In healthcare, where LeBrun sees AMI Labs’ first application through Nabla, the challenge isn’t just understanding medical reality. It’s understanding patient psychology. Do they want their doctor to be authoritative or collaborative? Do they process medical information better through statistics or stories? Are they motivated by fear of consequences or hope for outcomes?

In financial services, agents are handling investment advice, fraud detection, and customer onboarding. The technology can analyze market data at enormous scale. But can it understand whether a client is risk-averse or risk-seeking? Whether they trust data or intuition? Whether they want detailed explanations or executive summaries?

The agent deployments that are failing aren’t failing because they lack world models. They’re failing because they lack psychological models.

What True Personalization Actually Requires

Here’s what enterprise clients are actually asking for when they complain about bland, robotic agents: they want agents that understand people the way good salespeople, therapists, and teachers understand people.

A great salesperson doesn’t have a single personality they deploy with all customers. They read the customer’s psychology and adapt. Are they analytical or emotional? Do they want to be challenged or validated? Are they motivated by gains or losses? The salesperson doesn’t consciously run through a psychological framework, but they’re intuitively doing psychological modeling in real time.

A great therapist doesn’t treat every patient the same way. They understand that some patients need direct confrontation while others need gentle exploration. Some want homework and structure; others want free-flowing conversation. The therapeutic approach is personalized based on psychological assessment, not just symptom presentation.

A great teacher doesn’t use the same teaching style for every student. They understand learning styles, motivation patterns, and cognitive preferences. They know which students need encouragement and which need challenge, which need structure and which need freedom.

This is what personalization means in human-to-human interactions. It’s not about having different pre-defined personalities. It’s about having a psychological model of the other person that informs dynamic adaptation.

For AI agents to achieve this, they need three things current systems lack:

First, a psychological framework. Not personality types or demographic segments, but a valid model of human psychology that captures cognitive preferences, communication styles, motivation patterns, and decision-making approaches. This framework needs to be based on actual psychological research, not engineering intuitions about how humans work.

Second, real-time psychological inference. The agent needs to observe interactions and continuously update its psychological model of the user. Not just “this person is frustrated” (sentiment analysis) but “this person is conflict-avoidant and processes information visually” (psychological modeling).

Third, dynamic behavioral adaptation. Once the agent understands the user’s psychology, it needs to adjust its behavior accordingly. Not just tone and word choice, but information density, decision-making support, challenge versus validation, and dozens of other interaction variables.

This is a fundamentally different architecture from current LLMs or proposed world models. It requires a human infrastructure layer built on valid psychological data.
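To make the three components more concrete, here is a minimal Python sketch of how they might fit together in one loop. Everything in it is a hypothetical assumption for illustration: the trait names (`directness`, `detail_preference`, `risk_tolerance`), the moving-average update, the 0.5 thresholds, and the `UserModel` and `interaction_settings` names. It is not Solsten’s method or any production system, and a real framework would rest on validated psychometric research rather than three hand-picked dials.

```python
from dataclasses import dataclass, field

# Hypothetical trait dimensions, each estimated on a 0-1 scale.
# These names are illustrative assumptions, not a validated psychometric model.
TRAITS = ("directness", "detail_preference", "risk_tolerance")


@dataclass
class UserModel:
    """Component 1: a toy psychological profile, starting from a neutral prior."""
    estimates: dict = field(default_factory=lambda: {t: 0.5 for t in TRAITS})
    learning_rate: float = 0.2  # how strongly each new observation moves an estimate

    def update(self, trait: str, observed: float) -> None:
        """Component 2: real-time inference, sketched as an exponential moving average.

        `observed` is assumed to be a 0-1 signal produced upstream from a single
        interaction (e.g. the user asked for "just the summary").
        """
        if trait not in self.estimates:
            raise KeyError(f"unknown trait: {trait}")
        if not 0.0 <= observed <= 1.0:
            raise ValueError("observed signal must be in [0, 1]")
        old = self.estimates[trait]
        self.estimates[trait] = old + self.learning_rate * (observed - old)


def interaction_settings(model: UserModel) -> dict:
    """Component 3: dynamic adaptation, mapping estimates to interaction variables."""
    e = model.estimates
    return {
        "format": "detailed explanation" if e["detail_preference"] >= 0.5 else "executive summary",
        "tone": "direct" if e["directness"] >= 0.5 else "gentle",
        "framing": "upside scenarios" if e["risk_tolerance"] >= 0.5 else "downside protection",
    }


if __name__ == "__main__":
    user = UserModel()
    # Three interactions that (hypothetically) signal a low appetite for detail.
    for signal in (0.0, 0.1, 0.0):
        user.update("detail_preference", signal)
    print(round(user.estimates["detail_preference"], 3))  # 0.272
    print(interaction_settings(user)["format"])           # executive summary
```

The point of the sketch is the separation of concerns: the profile persists across conversations, inference only nudges it, and adaptation reads from it. That is what distinguishes this approach from pre-defined personas, where behavior is fixed upfront rather than derived from an evolving model of the user.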

Why Nobody Is Building This (Yet), Except for One Startup

The reason most AI companies aren’t building psychological infrastructure is the same reason most tech companies struggle with personalization generally: it’s hard, it’s slow, and it requires expertise outside traditional engineering domains.

Building a valid psychological framework requires partnerships with psychologists, cognitive scientists, and behavioral researchers. It requires longitudinal studies, validation against real-world outcomes, and constant refinement as you learn what actually predicts successful interactions. This is exactly what AI startup Solsten has been doing for the past eight years: taking state-of-the-art, medical-grade psychometric assessment methods, measuring hundreds of psychological data points across millions of real consumers, and continually refining those methods while building out the largest psychological database. That database can now serve as the foundation for the human infrastructure layer that both companies and AI agents desperately need.

Real-time psychological inference requires far more sophisticated observational AI than current sentiment analysis. You’re not just detecting emotional states; you’re inferring cognitive patterns from limited interaction data. This is a much harder machine learning problem than the classification tasks behind sentiment analysis.

Dynamic behavioral adaptation requires agents that can fluidly adjust dozens of interaction variables based on psychological models while maintaining coherence and achieving their core objectives. This is a control problem that makes most current AI agent architectures look primitive.

But the biggest barrier is that most AI companies are focused on what’s technically impressive rather than what’s actually useful. World models are intellectually fascinating. LLMs that can pass the bar exam make great demos. But agents that understand human psychology and adapt accordingly? That’s boring infrastructure work that doesn’t generate hype.

Until enterprise clients start walking away from deals because agents feel robotic. Until consumer applications see retention fall off because users feel like they’re talking to sophisticated chatbots. Until the gap between AI capability and AI adoption becomes undeniable.

Then the infrastructure work becomes urgent.

What This Means for the Next Wave

AMI Labs raised over $1 billion to build world models. That money will fund important research into how AI can better understand physical reality. But understanding physical reality without understanding psychological reality will leave a massive gap in agent usefulness.

The companies that figure out how to build valid psychological infrastructure will have a sustainable moat. Not because the technology is impossible to replicate, but because the expertise, partnerships, and validation required take years to build. You can’t copy-paste psychological frameworks the way you can fine-tune an LLM.

For enterprises deploying agents now, the lesson is clear: stop trying to solve the personality problem with pre-defined agent types. Start investing in understanding the psychological diversity of your users and building agents that can adapt accordingly.

For AI companies building the next generation of agents, the question isn’t just “how do we make agents smarter?” It’s “how do we make agents understand humans well enough to feel personal rather than algorithmic?”

The current generation of AI can mimic personality. The next generation might understand the world. But the generation that actually transforms human-computer interaction will be the one that understands human psychology well enough to adapt to it.

We’re not there yet. And we won’t get there by accident.

What’s your experience with AI agents? Do they feel personal or robotic? Let me know in the comments.