Why Advertising Money Will Force Roblox to Fix Child Safety (Not Regulators)

Roblox’s CEO was recently grilled over the company’s child safety efforts. Roblox is pushing boundaries to keep its platform safe. What will ultimately get it done is the economics of advertising.

Dear Readers,

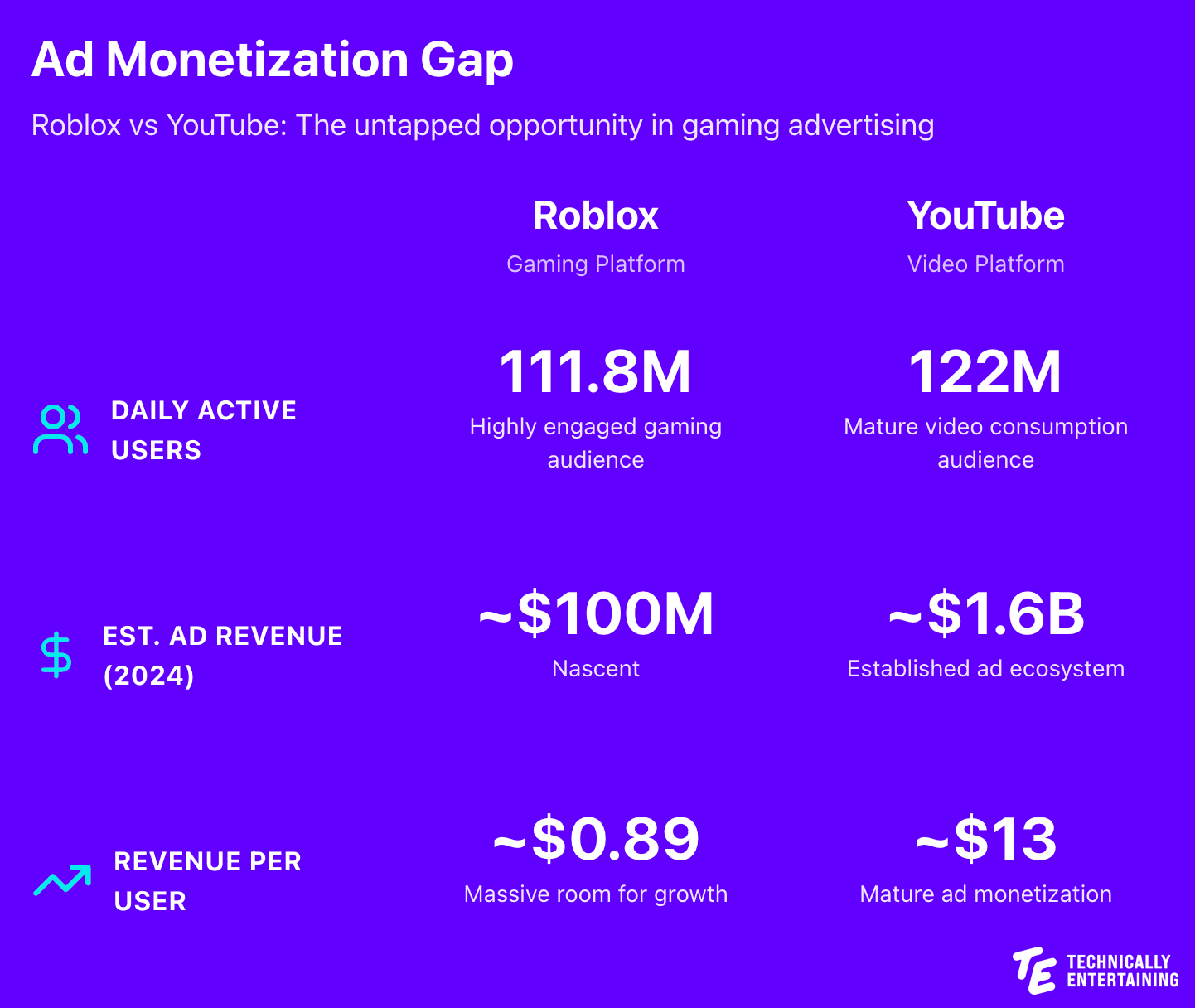

Roblox finds itself at a fascinating crossroads. The platform that has become the de facto digital playground for an entire generation (with 111.8 million daily active users who spend an average of 2.7 hours per day on the platform) is simultaneously pushing technological boundaries in child safety while facing mounting pressure to prove it can keep its youngest users safe.

The stakes couldn’t be higher, not just for Roblox as a business, but for the entire gaming ecosystem. As the first major platform to truly crack the code on persistent virtual worlds for children, Roblox has a responsibility that extends far beyond its own platform. The precedents it sets, the technologies it develops, and the standards it establishes will shape how the entire industry approaches child safety in immersive digital environments.

In a widely discussed appearance on the New York Times podcast Hard Fork, Roblox CEO David Baszucki set out to discuss the company’s child safety measures and the technological progress it is making on the problem, while also touting Roblox’s recent growth. He was put through the wringer, and that’s putting it mildly, in a showing that ended up being a huge missed opportunity for Roblox and gaming at large. In full transparency, I’m not in the camp of slamming the journalists. Baszucki’s PR team booked the appearance with the explicit focus on child safety, and the Hard Fork team did its job given the gravity of the topic. Baszucki had his talking points, and on most questions he did OK. On others, his responses felt like rehearsed PR salvos he was waiting to fire off, resulting in numerous moments that seemed tone-deaf. But feel free to watch the podcast and decide for yourself.

I do believe that neither Roblox nor its CEO is the villain here. Can more be done? Absolutely. Are they already doing a lot? Absolutely. But aside from lawsuits and bad PR, here’s what will ultimately drive Roblox to go to the absolute extremes of child safety: advertising revenue.

The Reality Check

Let’s be clear about what Roblox is dealing with. The platform hosts over 70 million user-generated experiences, with thousands of new ones created daily. It’s an unprecedented scale of content moderation.

Recent developments have highlighted the urgency. Since July, the law firm Dolman Law Group has filed six lawsuits in courts in Georgia, California and Texas claiming damages due to perceived child safety issues. In November, Texas filed suit against Roblox for allegedly “putting paedophiles and profits” over safety. A 270,000-signature Change.org petition has demanded the resignation of CEO David Baszucki.

These aren’t theoretical concerns. They’re real challenges that Roblox must address because the safety of millions of children depends on getting this right.

The Ecosystem Responsibility

Roblox isn’t just another gaming platform. It’s the blueprint for what immersive, social, user-generated virtual worlds look like for the next generation. When Roblox makes a decision about child safety (whether that’s implementing age verification, restricting social features, or developing new AI moderation tools), it’s setting expectations for every company building in this space.

Epic Games is watching. Meta is watching. Unity is watching. If Roblox can solve real-time moderation of user-generated content at scale, it provides a roadmap for the entire industry. If it fails, it creates a cautionary tale that could set back innovation in social gaming for years.

The company is essentially conducting R&D for the entire gaming industry on how to keep children safe in persistent virtual worlds. The technologies they develop, the policies they implement, and the standards they establish become the baseline for everyone else.

Pushing Technological Boundaries

The company isn’t ignoring the issue. In November 2025, Roblox announced it would block children from chatting with adult strangers, making it the first large gaming platform to require facial age verification for accessing chat features. Mandatory age checks will be introduced for all accounts using chat features, starting in December 2025 in select countries and in January 2026 globally.

The facial age verification technology uses the device’s camera to estimate a user’s age, with Roblox’s chief safety officer Matt Kaufman claiming the system can make estimates “within one to two years” for users aged between five and 25. Images are processed by an external provider and deleted immediately after verification. Users will be placed into age groups: under nine, 9 to 12, 13 to 15, 16 to 17, 18 to 20 and 21+, with players only able to chat with others in similar age ranges unless they add someone as a “trusted connection.”

At the September 2024 Roblox Developers Conference, the company announced additional safety updates including blocking any communication between adults and minors who don’t know each other in real life, updating maturity ratings, and banning sexual content entirely.

This is cutting-edge technology that didn’t exist five years ago and is being developed largely because Roblox needs it to exist. The company is deploying computer vision systems that can understand 3D environments, natural language processing that can detect concerning patterns in chat, and behavioral AI that can identify bad actors based on how they move through virtual spaces.

But Here’s What Will Really Force the Issue: Advertising

As impressive as Roblox’s safety investments are, there’s a harder truth: the company needs advertising revenue to achieve its growth ambitions, and brands have zero tolerance for safety concerns.

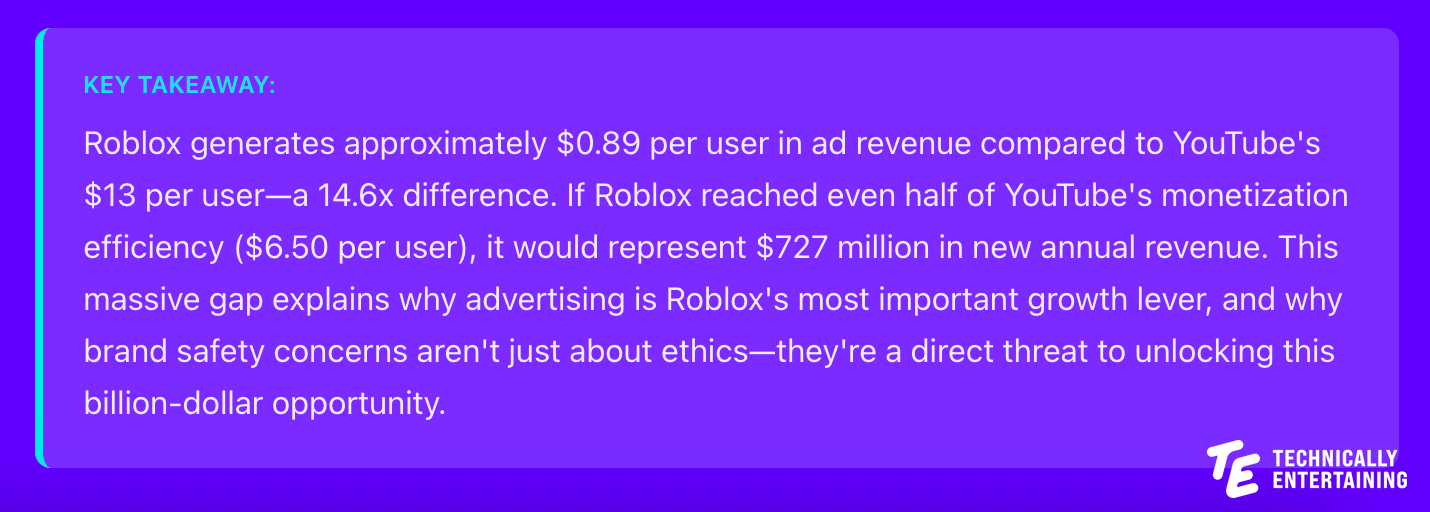

Roblox’s current business model relies heavily on users purchasing Robux (the platform’s virtual currency) to buy virtual items and experiences. The company generated $3.6 billion in revenue in 2024 and is forecasting full-year 2025 bookings of between $6.57 billion and $6.62 billion. But to reach the scale it is aiming for, Roblox needs advertising revenue. Morgan Stanley analysts predict that Roblox’s ad revenue could reach $1.2 billion by 2026.

The advertising opportunity is massive. Brands are desperate to reach Gen Z and Gen Alpha audiences in authentic ways. According to GEEIQ’s 2025 State of Brands in Gaming report, the total number of brands activating inside virtual worlds increased from 558 in 2023 to 828 in 2024, with 47% of those activations taking place inside Roblox.

But brands have extraordinarily high standards for brand safety. Research from Trusted Media Brands in partnership with Advertiser Perceptions found that more than 75% of brand marketers believe that brand safety impacts return on investment. The same study found that advertising in brand-safe environments is believed to drive significant impact on key measures including audience quality (83%), brand equity (82%), and brand lift (79%).

The Brand Safety Standard

Major advertisers operate under what’s essentially a zero-tolerance policy for brand safety issues. It’s not enough for a platform to be “mostly safe” or “safer than alternatives.” Before a brand commits serious advertising budgets, it needs to be confident that its association with the platform won’t create any reputational risk whatsoever.

The financial consequences of failing to meet this standard are severe. After Elon Musk’s acquisition of Twitter (now X), major advertisers fled the platform over content moderation concerns. X’s U.S. ad revenue dropped 55% in 2023, falling from approximately $4.1 billion to $1.9 billion. The exodus included major brands like Apple, Disney, IBM, and Comcast, all citing brand safety concerns. This represented a direct loss of over $2 billion in annual revenue because advertisers perceived the platform as too risky for their brands.

This reality is already affecting Roblox’s advertising business. Agencies trying to onboard new advertisers report that it’s becoming harder to convince brands to invest without third-party measurement tools and stronger safety assurances. As one Roblox creator, Empyror, noted: “As it stands, Roblox is deeply associated with poor moderation and child-safety concerns. They will have to get rid of that in some way in order to truly attract brands once again.”

The age composition of Roblox’s user base compounds the challenge. While 64% of all Roblox users are now over 13, roughly one-third remain under 13. U.S. regulations restrict how companies may advertise to children, especially those under age 13, and Roblox disables targeted ads for younger users. But mistakes happen. Ads for games and films were visible to platform users as young as five, prompting watchdog groups like Truth In Advertising to file complaints with the Federal Trade Commission.

Consider what happened with YouTube. The platform faced multiple “adpocalypse” events where brands pulled advertising en masse due to concerns about ad placement next to inappropriate content. Each time, YouTube had to implement increasingly strict content policies and sophisticated brand safety tools. The financial impact was immediate and painful, which created the corporate imperative to solve the problem.

Roblox is heading toward a similar inflection point. The more they push into advertising, the more they’ll face brand safety scrutiny. And that scrutiny will force them to implement safety measures that go beyond what might be strictly necessary to satisfy users or even regulators.

The Economic Forcing Function

You might think it’s cynical to suggest that money will drive safety improvements more than ethical responsibility or regulatory pressure. But it’s also pragmatic.

Regulatory pressure moves slowly and often results in compliance-oriented solutions that check boxes without fundamentally improving outcomes. Ethical responsibility is real and meaningful, but in public companies, it often loses out to other priorities when resources are constrained.

But advertising revenue? That’s immediate, measurable, and directly tied to executive compensation and stock price. When brand safety concerns cost you hundreds of millions in advertising revenue, solving those concerns becomes the top corporate priority.

The math is compelling. If Roblox generated even $5 to $10 in annual ad revenue per user (well below YouTube’s $13 per user), that could mean roughly $560 million to $1.1 billion in new revenue across its 111.8 million daily active users. And crucially, advertising would likely be a higher-margin business than Robux sales, which involve payment processing fees and significant revenue sharing with developers.
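
For readers who want to check the numbers, here is a minimal back-of-envelope sketch. It assumes the per-user figures are applied to the 111.8 million daily active users cited at the top of this piece; that base is my reading of the estimate, and the function name is purely illustrative.

```python
# Back-of-envelope check of the ad revenue range discussed above.
# Assumption: "per user" means per daily active user (111.8 million).
DAILY_ACTIVE_USERS = 111.8e6


def annual_ad_revenue(users: float, revenue_per_user: float) -> float:
    """Return annual ad revenue in dollars for a given per-user figure."""
    return users * revenue_per_user


low = annual_ad_revenue(DAILY_ACTIVE_USERS, 5.0)    # $5 per user per year
high = annual_ad_revenue(DAILY_ACTIVE_USERS, 10.0)  # $10 per user per year

print(f"Low case:  ${low / 1e6:,.0f} million")
print(f"High case: ${high / 1e9:.2f} billion")
```

Under that assumption, the low case comes to about $559 million and the high case to about $1.12 billion, which matches the rounded range above. A different user base (monthly actives, for example) would shift both ends.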

This isn’t to say Roblox doesn’t care about child safety for ethical reasons. They clearly do, given their investments to date. But the advertising model creates an economic forcing function that aligns corporate incentives with safety outcomes in a uniquely powerful way.

What Comes Next

As Roblox pushes harder into advertising, expect dramatically increased investment in safety technologies. Not just incremental improvements, but breakthrough solutions. The facial age verification system is just the beginning. Expect more sophisticated AI moderation systems that can understand context in 3D environments in real time.

More restrictive policies will follow, especially for younger users. The chat restrictions announced in November are a preview. Expect tighter controls on social features, more age-gated experiences, and possibly even separate “zones” of the platform with different safety standards.

Greater transparency and third-party verification are coming. Roblox has already begun working with firms like DoubleVerify, Nielsen, Kantar, and IAS to provide third-party measurement and brand safety verification. Expect this to expand significantly as brands demand independent audits and transparent reporting on safety metrics.

As the leader in this space, Roblox’s safety implementations will likely become the baseline that other platforms are measured against. What they develop becomes the industry standard, whether for virtual worlds, gaming platforms, or any social environment targeting younger users.

The Bigger Picture

Here’s the ultimate irony: the commercial imperative of advertising may be exactly what drives Roblox to create the safest possible environment for children in virtual worlds.

Roblox has a responsibility to the gaming ecosystem to get this right. They’re building the template for how hundreds of millions of children will experience social, immersive digital environments. The safety measures they implement, the technologies they develop, and the standards they establish will shape the industry for a decade or more.

But what will truly push them to the extremes of safety isn’t just that responsibility. It’s the fact that brands won’t advertise on a platform they perceive as risky. And brands are the key to unlocking the scale and revenue that Roblox needs to achieve its ambitions.

As Stagwell CEO Mark Penn noted in research on brand safety, marketers have “bought into this idea” that certain environments might be “too controversial” or risky for their brands. For Roblox, overcoming that perception isn’t optional. It’s existential.

This might be the best possible outcome. A forcing function that aligns corporate self-interest with child safety. Where making the platform as safe as humanly possible isn’t just the right thing to do but the only path to the business model that Roblox is building toward.

The question isn’t whether Roblox will push safety to the extremes. If they want those advertising dollars (and they absolutely do), they have no choice. The question is whether they’ll move fast enough, whether the technology can keep pace with the scale, and whether the standards they establish will be sufficient not just for their platform, but for the entire ecosystem they’re helping to create.

For the sake of the 111.8 million daily users, the majority of whom are children, we should hope the answer is yes.
