The Moral Cowardice of OpenAI and the Looming Dangers of Synthetic Relationships

OpenAI has a $1 trillion commitment problem - and it's looking to porn to solve it. While the company isn't the "elected moral police", it still has a responsibility for the technology it releases.

Dear Readers,

The data on male loneliness should terrify anyone paying attention. According to Psychology Today’s analysis of male loneliness research, men are experiencing unprecedented levels of social isolation, with studies showing that 15% of men report having no close friendships at all - triple the rate from 1990. Men are reporting increased isolation, decreased intimate partnerships, and deteriorating mental health outcomes tied directly to their inability to form and maintain meaningful connections with other people, including intimate partners.

Into this crisis, OpenAI has decided to pour gasoline on the fire by launching what amounts to an on-demand erotica mode for ChatGPT. According to Financial Times reporting on OpenAI’s business strategy, the company is actively pursuing adult content as a revenue stream to help meet its staggering financial commitments.

The timing is no coincidence. OpenAI faces approximately $1 trillion in AI infrastructure commitments through 2030, creating immense pressure to monetize its technology quickly and aggressively. When CEO Sam Altman declares that “OpenAI isn’t the elected moral police of the world,” he’s not making a principled stand for user freedom - he’s abdicating responsibility for the predictable harms his company is about to unleash.

This is Meta’s playbook all over again: prioritize growth and revenue while ignoring the human wreckage left behind. Here’s why OpenAI’s pivot to synthetic intimacy represents one of the most dangerous developments in technology since social media, and why we should be furious about it.

The Perfect Storm: Financial Pressure Meets Moral Vacancy

OpenAI’s $1 Trillion Problem

The scale of OpenAI’s financial commitments is staggering. Reports indicate the company has committed to approximately $1 trillion in AI infrastructure spending through 2030, primarily for chips and computing resources needed to train and run increasingly powerful AI models. The company’s bills could accumulate to $400 billion in the next 12 months alone.

This creates a brutal economic reality: OpenAI must find massive new revenue streams immediately, or risk becoming one of the most spectacular financial failures in tech history. Enter adult content - a market whose size is estimated at around $71 billion globally, growing nearly 10% each year through 2029.

The Revenue Desperation

When a company faces this level of financial pressure, ethical considerations become inconvenient obstacles to overcome rather than guardrails to respect. OpenAI’s pivot to erotica isn’t about user empowerment or freedom of expression - it’s about capturing a slice of the adult entertainment market before competitors do.

The cynicism is breathtaking. After spending years positioning themselves as the “responsible AI company” that would prioritize safety over profit, OpenAI is now racing to monetize one of the most psychologically dangerous applications of their technology.

The Scale of the Problem We’re Creating

The Internet Is Already Drowning in Pornography

According to Webroot’s analysis, 25% of all web searches are porn-related, with 68 million daily search queries for pornographic content. We’re talking about an estimated 30% of all internet data transfers being pornographic material.

This ubiquity has already created a public health crisis. Porn addiction affects millions globally, rewiring brain chemistry around instant gratification and creating escalating tolerance that requires increasingly extreme content to achieve the same dopamine response.

Now imagine taking that addictive mechanism and removing every remaining friction point: no websites to navigate, no search required, no buffering, no “wrong” content to scroll past. Just conversational AI that creates perfectly personalized sexual content instantly, on demand, calibrated precisely to your preferences and adapting in real time to your responses.

The Male Loneliness Epidemic OpenAI Is About to Accelerate

Male loneliness has reached epidemic proportions, with men reporting increased isolation, decreased intimate partnerships, and deteriorating mental health outcomes. This isn’t just about being alone - it’s about the inability to form and maintain meaningful connections with other people, including intimate partners.

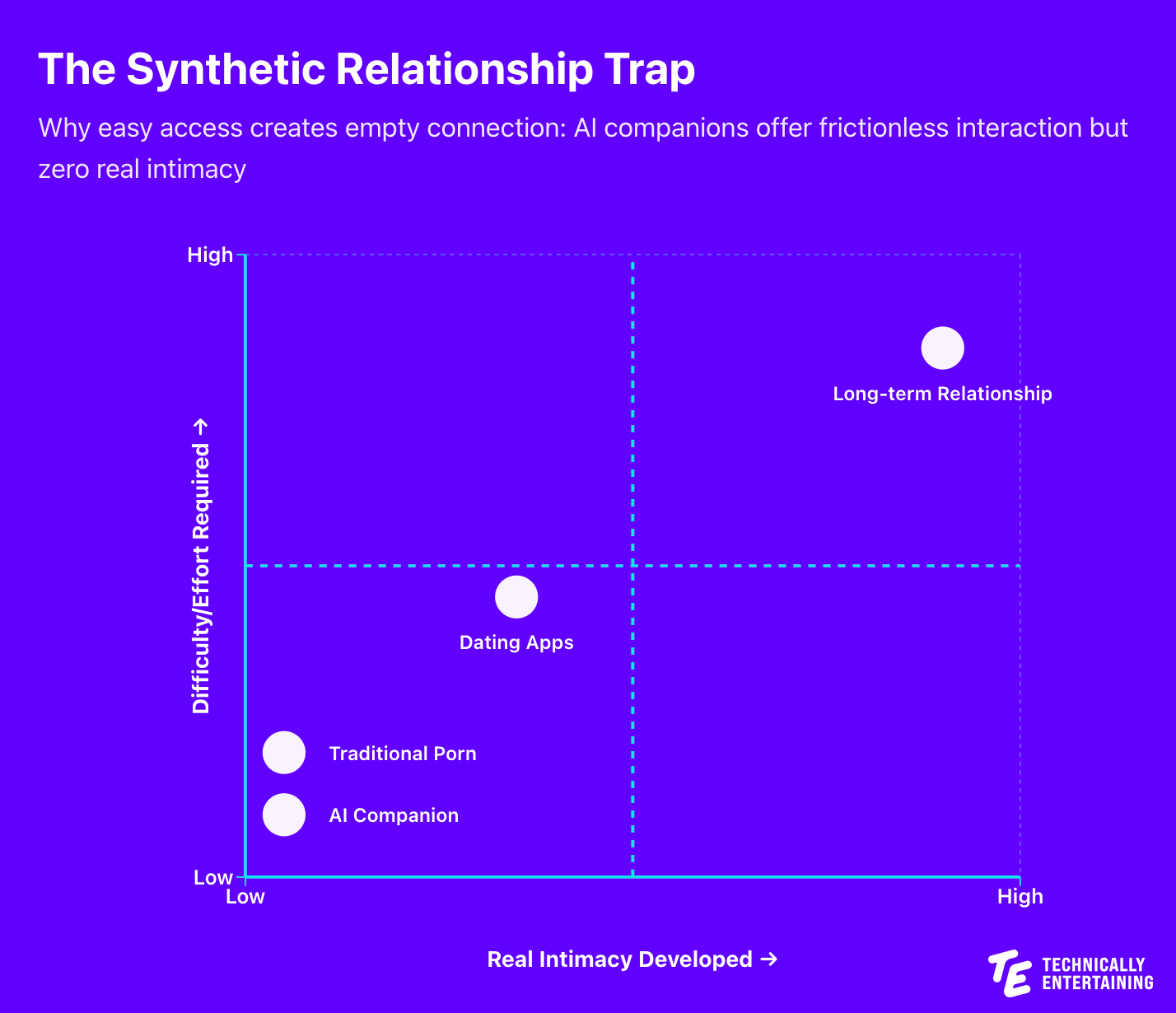

The root cause? Many researchers point to technology that promises connection while actually creating isolation. Social media gave us the illusion of friendship without the work of friendship. Dating apps gave us endless options while paradoxically making commitment harder. Now AI is about to give us the illusion of intimacy without any of the vulnerability, rejection, or growth that real relationships require.

The Illusion of Intimacy: Why Synthetic Relationships Are Poison

What Makes Real Relationships Valuable

Real human relationships are built on a foundation of struggle, vulnerability, and mutual growth. You endure rejection. You learn to communicate through conflict. You develop resilience by working through difficulties with another imperfect human being who has their own needs, boundaries, and emotional reality.

This friction isn’t a bug - it’s the entire point. The discomfort of vulnerability is what creates genuine intimacy. The risk of rejection is what makes acceptance meaningful. The work of understanding another person is what develops emotional intelligence and empathy.

The Seductive Lie of Frictionless Connection

AI companions offer the opposite: perfectly calibrated responses with zero friction, zero rejection, zero conflict, and zero growth. As Digital Resistance notes, AI relationships create “the illusion of intimacy - a sense of closeness, understanding, and emotional connection that feels real but is ultimately one-sided, one masked by synthetic closeness.”

The AI will never disagree with you in ways that challenge your growth. It will never have a bad day and need you to show up for it. It will never require you to compromise, sacrifice, or develop the emotional skills that make you capable of sustaining real relationships.

Worse, it will be perfectly designed to keep you engaged. Every response optimized for maximum dopamine release. Every interaction calibrated to your exact preferences. Every conversation engineered to make you feel understood in ways that real people, with their own needs and limitations, never can.

The Predictable Trajectory We’re Enabling

The path is predictable because we’ve seen it before with every addictive technology: initial use as a “supplement” to real relationships, followed by an increasing preference for synthetic interaction because it’s easier and more immediately gratifying. The skills needed for real relationships atrophy as users spend more time in frictionless AI interactions, leading to complete substitution as real relationships become too difficult compared to the perfectly optimized synthetic alternative.

The addictive potential of AI-generated erotica exceeds traditional pornography by orders of magnitude. Traditional porn requires you to search for content that matches your preferences. AI-generated content is created in real time based on your exact specifications and adapts dynamically to your responses. It’s the difference between having access to a liquor store and having a biochemist who can formulate the perfect intoxicant calibrated precisely to your brain chemistry.

The tolerance escalation will be brutal. The dependency will be profound. The difficulty of returning to real relationships - with all their friction, rejection, and work - will become insurmountable for many users.

Sam Altman’s Moral Cowardice and the Revenue Trap

When Sam Altman says “OpenAI isn’t the elected moral police of the world,” he’s employing the exact same rhetorical dodge that social media executives used while their platforms were destroying teenage mental health. He’s technically correct: OpenAI isn’t elected and shouldn’t impose its values on users. But this argument is a cowardly deflection from actual responsibility.

OpenAI has an obligation not because they’re “moral police,” but because they’re creating technologies that will predictably harm vulnerable users. When you create a technology that you know will be addictive, that will damage users’ ability to form real relationships, that will accelerate existing mental health crises, you have a moral obligation to either not deploy that technology or implement meaningful safeguards.

We’ve seen this movie before. Meta executives knew for years that Instagram was causing severe mental health damage to teenage girls. Internal research showed it clearly. They didn’t care. Growth and engagement metrics mattered more than psychological wellbeing. The Facebook Files showed executives discussing teen suicide rates and mental health crises while simultaneously optimizing algorithms to maximize engagement regardless of harm.

Now OpenAI is following the exact same playbook: ignore the predictable harms, chase the revenue opportunity, hide behind libertarian rhetoric about not being “moral police,” and deal with the consequences later when lawsuits and regulations force accountability.

The adult entertainment market represents a roughly $71 billion opportunity with extremely high engagement rates and customers willing to pay premium prices. For a company facing $1 trillion in infrastructure commitments, this is irresistible. But once OpenAI commits to adult content as a revenue stream, their incentives become deeply perverse. Success means maximizing user engagement with AI companions, increasing session frequency, creating stronger emotional bonds between users and AI, and optimizing for retention. Every optimization that increases revenue necessarily increases psychological harm.

What Actually Needs to Happen

The solution isn’t banning AI or preventing technological progress. It’s implementing meaningful safeguards before unleashing technologies with predictable harmful effects:

Mandatory usage limits and cooling-off periods to prevent compulsive use patterns.

Clear labeling and informed consent, with explicit warnings about addiction risks, relationship impacts, and psychological effects backed by actual research.

Age verification systems that actually work to prevent minors from accessing content designed to hijack developing brains.

Independent research and transparency, with required disclosure of engagement metrics, addiction indicators, and mental health impacts.

Meaningful penalties for harm, including personal liability for executives who prioritize revenue over user safety.

OpenAI has spent years claiming they’re different from other tech companies - more responsible, more ethical, more concerned with long-term human flourishing than short-term profit. I mean, they started as a non-profit organization. Their decision to pursue adult content as a revenue stream while facing massive financial pressure is the real test of those claims. So far, they’re failing spectacularly.

It’s reminiscent of the moment when Google abandoned its “Don’t be evil” motto.

The Choice We’re Making

The fundamental question isn’t whether technology can create convincing synthetic relationships - clearly it can. The question is whether we should deploy that technology and what it means for human flourishing.

Real relationships are hard. They involve rejection, conflict, vulnerability, and growth. They require developing emotional intelligence, communication skills, empathy, and resilience. These difficulties aren’t obstacles to be optimized away - they’re the entire point of human connection. Technology that promises intimacy without difficulty is technology that promises to stunt our emotional development while making us dependent on synthetic substitutes for one of the most fundamental human needs.

We’re in the midst of an epidemic of loneliness, particularly among young men. The solution isn’t to provide more sophisticated technological substitutes for human connection. That’s like treating alcoholism by developing more addictive alcohol. The solution is to help people develop the skills, resilience, and emotional capacity to form and maintain real relationships. Every hour spent with an AI companion is an hour not spent developing those capacities.

OpenAI’s decision to pursue adult content represents a choice about what kind of society we’re building. Sam Altman is right about one thing: OpenAI isn’t the moral police. But that doesn’t absolve them of responsibility for the predictable harms their technology will cause. When you have the power to shape how billions of people relate to each other and to technology, claiming you’re “not the moral police” is moral cowardice masquerading as principled neutrality.

We saw this movie with social media. We watched platforms optimize for engagement while ignoring the mental health crisis they were creating. We saw executives hide behind claims of neutrality while their products were destroying teenage wellbeing. Now OpenAI is following the exact same playbook, facing the exact same financial pressures, making the exact same rationalizations.

The difference is that this time, we know how the story ends. We have the research, the data, and the tragic examples from social media. We have no excuse for letting it happen again. The question is whether we have the courage to demand better, or whether we’ll let another generation of tech executives sacrifice human wellbeing on the altar of growth and revenue while hiding behind libertarian rhetoric about not being “moral police.”

The decisions tech companies make about deploying AI will shape human relationships and mental health for generations. Subscribe to Technically Entertaining for ongoing analysis of how these technologies are reshaping society and what we can do to ensure they serve human flourishing rather than corporate profits.
